News

In the News

Dongkuan (DK) Xu Receives Dean’s Applied AI Research Accelerator Award

Yang Receives Goldwater Scholarship

Spring 2026 Senior Design Center’s Posters and Pies

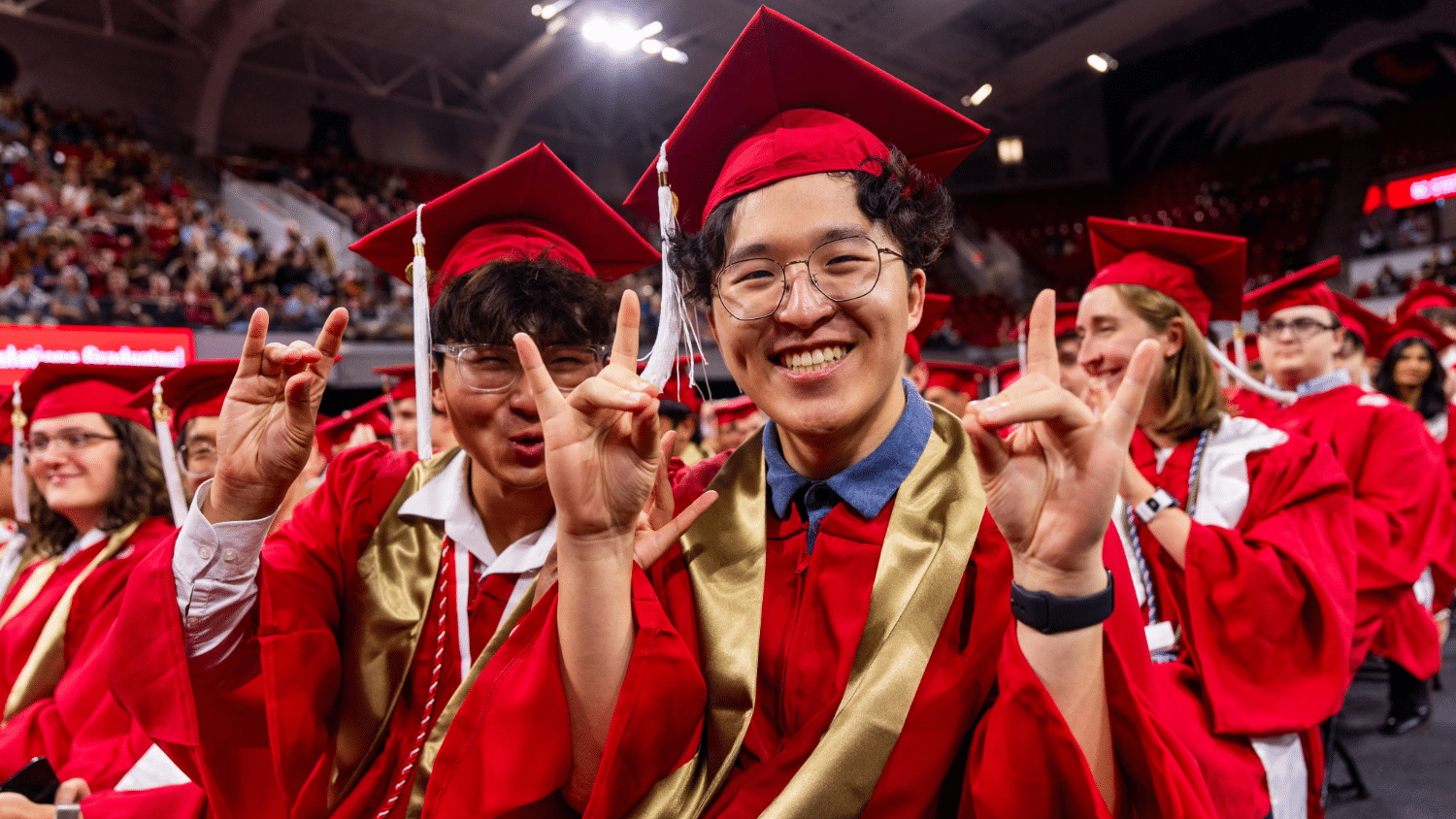

Congratulations to our Spring 2026 Graduates!

Welcoming Department of Computer Science Head Daphne Yao

Interns Tackle Ag Challenges With the N.C. PSI Makerspace

Researchers Propose a New Inspection Method to Improve Online Collaboration Platforms

Three NC State Students Receive Goldwater Scholarships

Shahnewaz Leon, Sandeep Kuttal Receive Honorable Mention at ACM CHI

Jamie Jennings Wins PEP Award

Shandler Mason: Human-Centered Software Engineering